Back-end Web Performance Consultant

TTFB, the Glass Ceiling of Performance

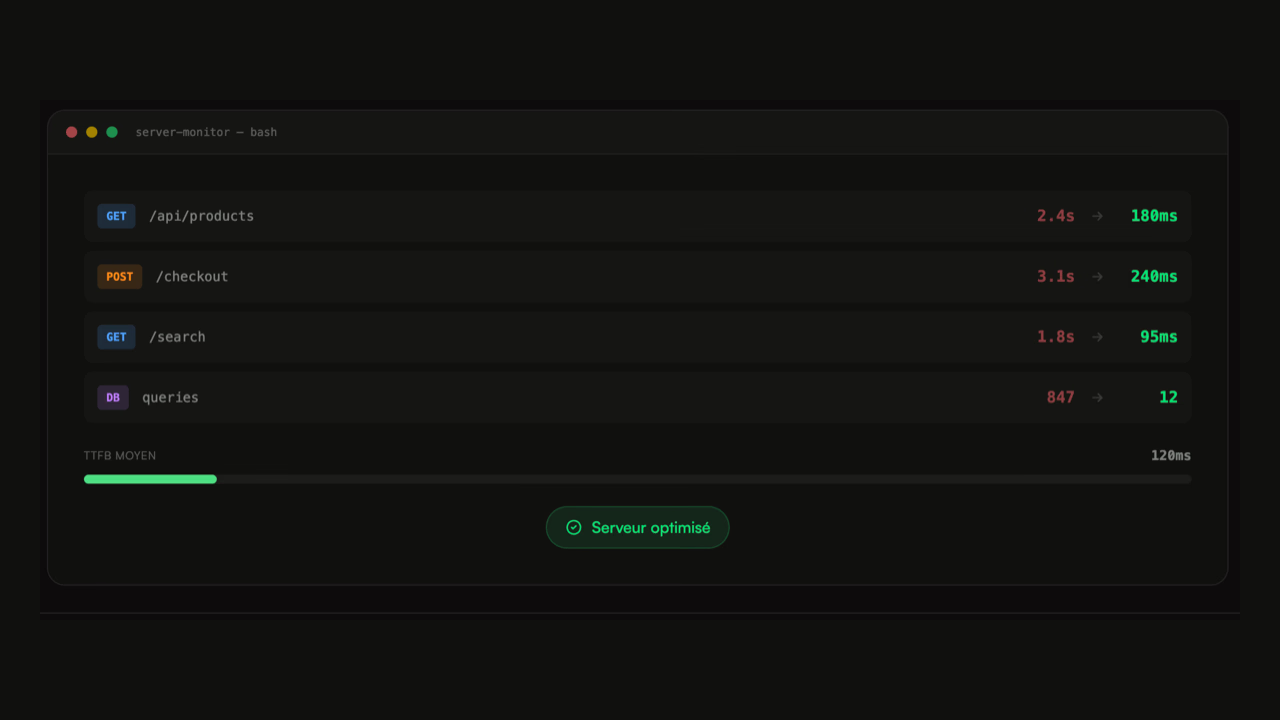

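Time to First Byte (TTFB) sets the floor for the entire rendering chain: the browser can't parse HTML, discover resources, or paint anything until the server has responded. With a TTFB of 800 ms, even a perfectly optimized front-end will in practice struggle to bring LCP below 1.5 to 2 seconds, because the LCP resource still has to be discovered, downloaded, and rendered after that first byte arrives.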
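To make the arithmetic concrete, here is a small sketch based on the four-part LCP breakdown (TTFB, resource load delay, resource load duration, element render delay) described in Google's web.dev guidance. The front-end timings are assumed values for an already lean page, not measurements; only TTFB changes between the two cases.

```python
# Hypothetical LCP budget, split into the four sub-parts used in Google's
# web.dev guidance. Every millisecond value below is illustrative.
GOOD_LCP_MS = 2500  # Core Web Vitals "good" threshold for LCP


def lcp_ms(ttfb, resource_load_delay, resource_load_duration, element_render_delay):
    """LCP is the sum of its sub-parts; TTFB is always the first term."""
    return ttfb + resource_load_delay + resource_load_duration + element_render_delay


def front_end_budget_ms(ttfb, target=GOOD_LCP_MS):
    """Time left for everything that happens after the first byte."""
    return target - ttfb


# Same lean front-end in both cases; only the server response time changes.
FRONT_END = dict(resource_load_delay=200, resource_load_duration=500, element_render_delay=150)

fast_backend = lcp_ms(ttfb=200, **FRONT_END)  # 1050 ms
slow_backend = lcp_ms(ttfb=800, **FRONT_END)  # 1650 ms
print(fast_backend, slow_backend, front_end_budget_ms(800))  # 1050 1650 1700
```

With an identical front-end, moving TTFB from 200 ms to 800 ms adds the full 600 ms to LCP: no front-end technique can win back time spent before the first byte.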

This comes up regularly in audits: teams spend weeks on lazy loading and image compression while the real bottleneck is a 1.2-second server response on category pages. Until the back-end is fixed, front-end optimizations have only a limited effect.

Common Causes

Back-end performance problems look much the same from one project to the next. N+1 queries turn the display of a 50-product catalog into some 200 database calls: one query for the list, then several more for each product. The application cache is configured but invalidated too often, or not at all. Synchronous calls to third-party APIs (ERP, search engine, stock service) block rendering until every one of them has responded.
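The N+1 pattern, and the usual fix of batching related lookups into one `WHERE ... IN (...)` query per relation, can be sketched with Python's built-in `sqlite3`. The schema is hypothetical: four per-product relations (`price`, `stock`, `image`, `review`) were chosen so that a 50-product page lands near the 200 calls mentioned above. `CountingDB` is an illustrative wrapper, not part of any library.

```python
import sqlite3

# Hypothetical schema: four per-product relations, so a 50-product page
# costs 1 + 50 * 4 = 201 queries with the N+1 pattern.
RELATED = ("price", "stock", "image", "review")


class CountingDB:
    """Wraps a sqlite3 connection and counts the queries issued through it."""

    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def fetch(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def setup(n_products=50):
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE product (id INTEGER PRIMARY KEY, name TEXT)")
    ids = range(1, n_products + 1)
    conn.executemany("INSERT INTO product VALUES (?, ?)", [(i, f"product {i}") for i in ids])
    for table in RELATED:  # table names come from a constant, never from user input
        conn.execute(f"CREATE TABLE {table} (product_id INTEGER, value TEXT)")
        conn.executemany(f"INSERT INTO {table} VALUES (?, ?)", [(i, f"{table}-{i}") for i in ids])
    return CountingDB(conn)  # setup statements are not counted


def catalog_n_plus_one(db):
    page = []
    for pid, name in db.fetch("SELECT id, name FROM product ORDER BY id"):
        row = {"id": pid, "name": name}
        for table in RELATED:
            # One extra round trip per product and per relation: the "+N".
            row[table] = db.fetch(f"SELECT value FROM {table} WHERE product_id = ?", (pid,))[0][0]
        page.append(row)
    return page


def catalog_batched(db):
    products = db.fetch("SELECT id, name FROM product ORDER BY id")
    page = {pid: {"id": pid, "name": name} for pid, name in products}
    placeholders = ",".join("?" * len(page))
    for table in RELATED:
        # One query per relation for the whole page, whatever its size.
        sql = f"SELECT product_id, value FROM {table} WHERE product_id IN ({placeholders})"
        for pid, value in db.fetch(sql, list(page)):
            page[pid][table] = value
    return list(page.values())


if __name__ == "__main__":
    slow, fast = setup(), setup()
    assert catalog_n_plus_one(slow) == catalog_batched(fast)
    print(slow.queries, fast.queries)  # 201 5
```

Both functions return the same rows, but the batched version issues a constant number of queries per relation regardless of page size. ORMs generally expose the same idea as eager loading; very large pages may need chunking, since databases cap the number of bound parameters per statement.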

Instrumentation is the starting point: without visibility into what happens inside a request, optimization is guesswork. Whether it's Dynatrace, Datadog, or New Relic, the tool matters less than being able to trace an HTTP request end to end and see exactly where the time goes. On an e-commerce checkout, the difference between "the server takes 8 seconds" and "the server takes 8 seconds, 5 of which are spent waiting for the ERP response" completely changes the approach.
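APM tools collect this breakdown automatically through distributed tracing. The sketch below only illustrates the underlying idea, timing named spans inside one request with the standard library, and reproduces the 8-second checkout scaled down to 80 ms. `RequestTrace`, `handle_checkout`, and the span names are hypothetical and do not mirror any vendor's API; the sleeps stand in for real database and ERP calls.

```python
import time
from contextlib import contextmanager


class RequestTrace:
    """Hypothetical per-request tracer: wall-clock time per named, non-nested span."""

    def __init__(self):
        self.spans = {}  # span name -> accumulated seconds

    @contextmanager
    def span(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Accumulate, so a span entered several times is summed.
            self.spans[name] = self.spans.get(name, 0.0) + (time.perf_counter() - start)

    def breakdown(self):
        """Spans sorted from slowest to fastest, with their share of the traced time."""
        total = sum(self.spans.values()) or 1.0
        return sorted(
            ((name, secs, secs / total) for name, secs in self.spans.items()),
            key=lambda item: item[1],
            reverse=True,
        )


def handle_checkout(trace):
    # Simulated work: 8 s scaled down to 80 ms, with 50 ms spent on the ERP.
    with trace.span("db_queries"):
        time.sleep(0.02)
    with trace.span("erp_call"):
        time.sleep(0.05)
    with trace.span("render_template"):
        time.sleep(0.01)


if __name__ == "__main__":
    trace = RequestTrace()
    handle_checkout(trace)
    for name, secs, share in trace.breakdown():
        print(f"{name:16} {secs * 1000:6.1f} ms  {share:5.1%}")
```

The output ranks `erp_call` first, which is exactly the kind of finding that shifts the work from tuning SQL to handling the external dependency.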

Cache and Invalidation Strategy

Caching is probably the most powerful and most underused back-end lever. A well-configured page cache can cut TTFB by a factor of 10 on high-traffic pages. The hard part is invalidation: when a price changes, when a product goes out of stock, when a promotion starts.

Most implementations I see are either too aggressive (a 5-minute cache that serves outdated prices) or too conservative (a global purge on every change, which amounts to having no cache at all). An effective strategy is granular: it invalidates exactly what has changed, not the entire catalog.
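One common way to make invalidation granular is tag-based purging, which CDNs and reverse proxies often call surrogate keys or cache tags: each cached page records which entities it depends on, and a change to one entity purges only those pages. The in-memory `TaggedPageCache` below is a hypothetical sketch of that idea, with a TTL kept as a safety net; the URLs and tag names are illustrative.

```python
import time


class TaggedPageCache:
    """Hypothetical page cache with a TTL safety net and tag-based invalidation."""

    def __init__(self, ttl_seconds=3600, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self.entries = {}  # url -> (expires_at, body)
        self.by_tag = {}   # tag -> set of urls that depend on it

    def get(self, url):
        entry = self.entries.get(url)
        if entry is None:
            return None
        expires_at, body = entry
        if self.clock() >= expires_at:
            del self.entries[url]  # expired: treat as a miss
            return None
        return body

    def set(self, url, body, tags):
        self.entries[url] = (self.clock() + self.ttl, body)
        for tag in tags:
            self.by_tag.setdefault(tag, set()).add(url)

    def invalidate_tag(self, tag):
        """Purge only the pages that depend on `tag`; return how many were dropped.

        The tag index may still reference URLs already purged via another tag;
        a production version would clean those up.
        """
        purged = 0
        for url in self.by_tag.pop(tag, set()):
            if self.entries.pop(url, None) is not None:
                purged += 1
        return purged


if __name__ == "__main__":
    cache = TaggedPageCache(ttl_seconds=3600)
    cache.set("/product/42", "page 42", tags=["product:42"])
    cache.set("/product/43", "page 43", tags=["product:43"])
    cache.set("/category/shoes", "shoes", tags=["category:shoes", "product:42", "product:43"])
    print(cache.invalidate_tag("product:42"))  # 2: product 42 and the category page
    print(cache.get("/product/43"))            # page 43: untouched
```

When the price of product 42 changes, only its own page and the category listing that shows it are purged; the rest of the catalog stays warm, and the TTL bounds how long any missed dependency can serve stale data.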

Performance and Infrastructure Costs

A back-end that uses less CPU per request needs fewer servers to handle the same traffic. On cloud infrastructure billed by usage, code optimization shows up directly on the bill. Optimized SQL queries, effective caching, parallelized external calls: it all adds up. The gain in user-facing performance is almost a bonus on top of the cost reduction.
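Parallelizing external calls, which also addresses the synchronous third-party calls listed under Common Causes, can be sketched with Python's `asyncio`. The three `fetch_*` coroutines are hypothetical stand-ins simulated with `asyncio.sleep`; a real integration would use an async HTTP client. The point is the shape of the timing: sequential awaits add up, while `asyncio.gather` costs roughly as much as the slowest call.

```python
import asyncio
import time


# Hypothetical stand-ins for third-party calls; the delays are illustrative.
async def fetch_erp_prices():
    await asyncio.sleep(0.15)
    return {"sku-1": 19.90}


async def fetch_stock():
    await asyncio.sleep(0.10)
    return {"sku-1": 4}


async def fetch_search_facets():
    await asyncio.sleep(0.05)
    return ["color", "size"]


async def sequential():
    # Each call waits for the previous one: roughly 0.15 + 0.10 + 0.05 s.
    return (await fetch_erp_prices(), await fetch_stock(), await fetch_search_facets())


async def parallel():
    # All three calls are in flight at once: roughly the slowest one, 0.15 s.
    results = await asyncio.gather(fetch_erp_prices(), fetch_stock(), fetch_search_facets())
    return tuple(results)


async def timed(coro_fn):
    start = time.perf_counter()
    result = await coro_fn()
    return result, time.perf_counter() - start


if __name__ == "__main__":
    for fn in (sequential, parallel):
        _, elapsed = asyncio.run(timed(fn))
        print(f"{fn.__name__}: {elapsed:.2f} s")
```

This only works when the calls are independent: if the stock lookup needs the ERP response, those two stay sequential. In production, each call would also get a timeout (for example with `asyncio.wait_for`) so that one slow provider can't hold up the whole page.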


Data 2023-2025